Annamária Erdei, Irma Kunnari & Marja Laurikainen

In this article, we present our approach to evaluating the impacts of the Empowering Eportfolio Process (EEP) project, implemented by six higher education institutions (HEIs) in five European countries. Häme University of Applied Sciences (HAMK), from Finland, operated as the coordinator with partners from Belgium (Katholieke Universiteit Leuven, KU Leuven; and University College Leuven-Limburg, UCLL), Denmark (VIA University College), Ireland (Marino Institute of Education, MIE) and Portugal (Polytechnic Institute of Setúbal, IPS). HAMK was also responsible for organizing the impact evaluation.

The aim of the EEP project (2016‒2018) was to develop student-centred and competence-based higher education by focusing on assessment and guidance practices and by building an empowering and dynamic approach to the ePortfolio process. The starting point of all the partners was analysed at the beginning of the project (see Kunnari & Laurikainen 2017) and the common development targets were further specified. The central focus was to emphasize the ownership and the role of students in creating their own ePortfolios to use as workspaces in their learning processes and as showcases when looking for employment (Barrett 2010; Kunnari & Laurikainen 2017, 7). To reach this goal, several levels were identified as important to address: students, teachers and study counsellors, and the higher education organization. Furthermore, the project aimed at capturing the voice of the world of work. During the project, all these perspectives were analysed by each partner in their own contexts and the outcomes were published in the following four article collections: 1) Collection on Engaging practices on ePortfolio Process (Kunnari & Laurikainen 2017a), 2) Students’ perspectives on ePortfolios (Kunnari & Laurikainen 2017b), 3) Employers’ perspectives on ePortfolios (Laurikainen & Kunnari 2018) and 4) Higher education perspectives on ePortfolios (Kunnari 2018), all available at unlimited.hamk.fi. The EEP project is described in more detail in the introduction article.

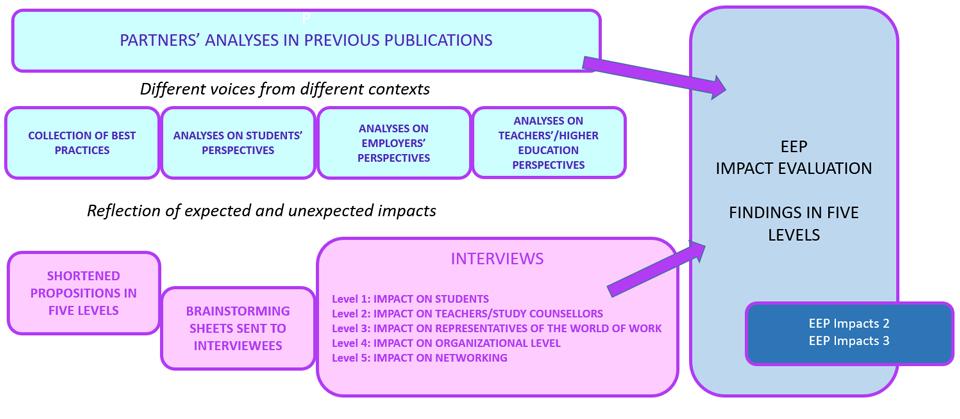

In the final phase of the EEP project, it was considered important to evaluate both the expected and the unexpected impacts of the project. In this article, we describe how the evaluation model was created and how the evaluation was implemented to build understanding of the various impacts. We also present some general findings and observations related to the impact evaluation, but the more detailed findings are presented in two other articles: EEP Impacts 2 – Students’, teachers’, and employers’ perspectives and EEP Impacts 3 – organizational and networking perspectives. The main question in our impact evaluation was: How did the partners experience the impacts of the EEP project? The benefits of impact evaluation are discussed further at the end of this article.

Developing the impact evaluation model for EEP

Our main approach to impact evaluation follows the principles of practice-based research or practitioner research, which refers to ‘workplace research or development work within a professional field, that is carried out by practitioners, who are personally involved with the professional practices, actions and activities of the field’ (Heikkinen, de Jong, & Vanderlinde 2016). In this impact evaluation, we wanted to focus on practitioners’ reflections, such as partners’ reflections on their own development activities and the voices of students, teachers and work life representatives.

After an extensive review of the literature on ePortfolios and on various educational and impact evaluation models, a dedicated impact evaluation model was developed to assess the impact of the EEP project. The first author of this article was mainly responsible for creating the model and the second author represented the project coordinator’s point of view. Furthermore, the requirements of the financing institution were discussed and considered.

Various educational and impact analysis models provided useful insights for the development of the EEP impact evaluation model. As Nuutinen, Mälkki, Huutoniemi & Törnroos (2016, 4, 43, 50) highlight, research results, inventions, methods or other outputs can be transferred outside the scientific community, which is also an important goal in Erasmus development projects. For example, researchers and developers cooperate, discuss and share information outside their community, e.g. with school programmes, organizations or other professionals. The first condition for having an impact is that the information created by the project moves outside the community through three main channels: a) experts, b) cooperation and other forms of communication, and c) direct benefits from scientific results. These conditions were taken into account when constructing the EEP evaluation model. Various aspects and parts of the Logical Framework Matrix, LFM (Rasimus 2011, 21–26, 28–34) were also considered for the evaluation, with a special focus on expected results and outputs, actions, resources, tools for realization and indicators. The LFM is a tool created to define the structure and activities of a project, and it can also be used in the evaluation phase. The primary focus in our evaluation was on changes and impacts, following Tykkyläinen’s (2017, 5, 13) impact evaluation model. Her model is based on a process in which inputs, outputs and outcomes play important roles. However, the main emphasis is on evaluating the final stage, which is impacts. Among indicators, expert experiences, narratives, stories and case studies received special attention because they are powerful indicators (Nuutinen et al. 2016, 35). Finally, the importance of the human spirit behind evaluation was also considered, embracing the idea that learning should transfer into behaviour (Kirkpatrick 2016, 1, xi–xv, 5–6).

Studies on the impact analysis of two partially similar ePortfolio projects were also benchmarked, with a special focus on the methods used to evaluate the impact. Firstly, EUfolio, an international EU classroom ePortfolio project on developing ePortfolios in lower secondary schools, highlighted that ePortfolios can be introduced to an educational system as a tool for documenting and assessing competence-oriented learning. This had an impact on the teachers’ learning design process, while students’ engagement and motivation received greater emphasis (Ghoneim & Ertl 2016, 58). Secondly, the Connect to Learning (C2L) project aimed to find out what difference ePortfolios can make in HE. To answer the question, three propositions were made: (1) ePortfolio initiatives advance student success; (2) making student learning visible, ePortfolio initiatives support reflection, social pedagogy, and deep learning; and (3) ePortfolio initiatives catalyse learning-centred institutional change. The impact evaluation supported the propositions and indicated that HEIs can become real “learning colleges”, where everyone and the college itself are learners (Eynon, Gambino & Török 2014, 95–96, 108). This benchmarking encouraged us to use propositions as a reflection frame and to consider unexpected meta-level impacts as important outcomes of the EEP project.

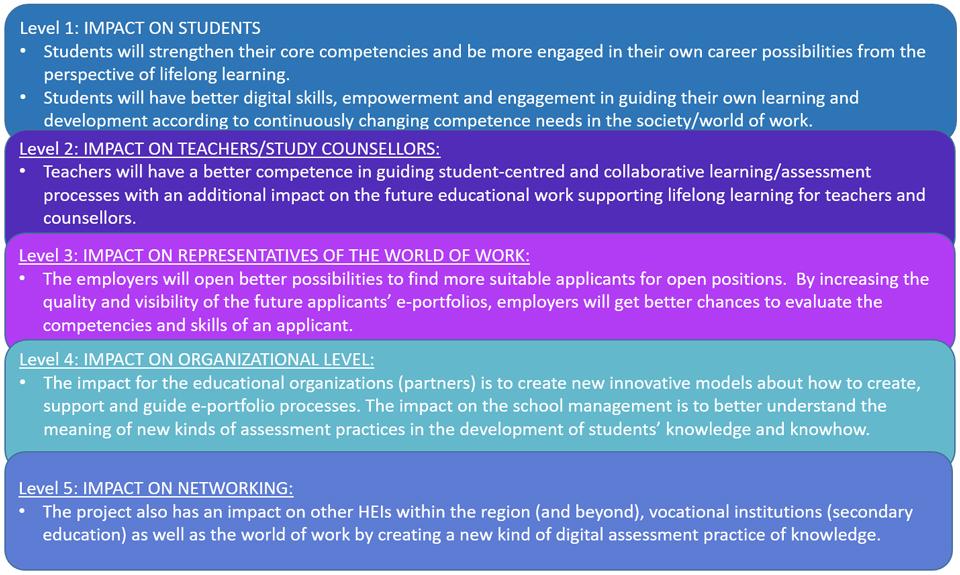

To cover the impacts as comprehensively as possible, the EEP impact evaluation model was developed to analyse the impact of the project on five levels: students, teachers and study counsellors, representatives from the world of work, organizations, and networks. As in the analysis of the C2L project (Eynon et al. 2014, 95–96), the EEP impact evaluation model used propositions, in this case shortened from those outlined in the Erasmus+ Application form (Laurikainen 2016, 63–64). Figure 1 presents the propositions related to expected impacts on the five analysis levels.

The impact evaluation aimed to find out to what extent the propositions in Figure 1 held true, based on qualitative theme interviews with open-ended questions. These propositions were used in the interviews with partners and in analysing the relevant data collected from previous publications of the project. The focus was on qualitative changes and experiences from the perspectives of practitioners (see Heikkinen et al. 2016). Quantitative impacts will be documented in the project final report according to the instructions of the funding agency. Quantitative indicators include, for example, how many persons were involved, how many articles were published and how many workshops were organized during the project, all of which demonstrate the dissemination of the project outcomes.

In order to increase the verifiability of the evaluation, i.e. to help the experts reflect on evidence of impact with relevant indicators, a brainstorming sheet in the form of a table based on the five levels was sent to the interviewees a few days before the interviews. The idea of the sheet was influenced by the Erasmus+ Impact Tool (The Erasmus+ UK National Agency 2016, 12‒13). It was also used to increase dialogic validity (Anderson & Herr 1999). The interviewees were asked to reflect on impacts that they had experienced during the implementation of the project, and they had a chance to collect possible indicators as evidence of the impact. The sheet also contained the five propositions so that the interviewees could consider whether the aspects described in them had been realised. The qualitative impact evaluation model is outlined in Figure 2 and the other procedures are explained in the following sections.

Implementation of impact evaluation

In April 2018, the first author of this article conducted seven theme interviews with eleven developers of the project working in the six partner institutions. The interviewees were from Marino Institute of Education (Ireland), VIA University College (Denmark), Katholieke Universiteit Leuven (Belgium), UC Leuven-Limburg (Belgium), Instituto Politécnico de Setúbal (Portugal), and Häme University of Applied Sciences (Finland), the last of which was represented by both its bachelor-level education and its professional teacher education. One interview was conducted in person and six online. The interviews lasted 40–75 minutes and were recorded. In accordance with the evaluation model and the brainstorming sheet, the interviews consisted of five questions designed to reveal the impact on the five analysis levels. Apart from the five major questions, assisting questions were occasionally used to clarify certain issues and to support further reflection. The educational context of each partner was different, and their experience and expertise in developing ePortfolio practices varied, so it was important for the interviewer to ensure mutual understanding. The experts provided evidence of the EEP project generating impact at the five levels in various ways: through personal, organizational or peer experiences, narratives, case studies and other indicators. The interviews were transcribed in May and June 2018.

The interview data was content analysed (see Krippendorff 2004) in July, August and September 2018 by the first author. In addition, relevant expert insights from the previous article collections of the EEP project were incorporated into the impact evaluation analyses. The five levels provided a structure for data analysis: all relevant impact references were grouped around a frame at each level. The frame consisted of the following subdivisions: 1) expected impacts; 2) unexpected impacts; 3) challenges; and 4) ideas for improvement. The first subdivision, expected impacts, was based on the propositions. Unexpected impacts included all relevant impacts that were not predicted by the propositions. Challenges comprised the various problems, difficulties and hardships that partners observed. Ideas for improvement consisted of experts’ proposals to overcome challenges or to further enhance processes that they had experienced as successful. Partners’ analyses of students’, employers’ and teachers’/HEIs’ perspectives in the publications were also considered and grouped around the five levels, following the same subdivisions at each level.

In addition, the first author identified similarities and differences and narrowed the data to the parts that best described and evidenced the impacts of the EEP project. Partners’ analyses were referenced briefly at each level, followed by the impacts revealed in the interviews. Sometimes impacts outlined in the partners’ analyses were repeated in the interviews. If partners revealed new data or presented a different perspective on an issue, these impacts were also referred to in the evaluation articles. The second author provided the practitioner’s and coordinator’s perspective by commenting on the analysis and participating in writing EEP impact articles 2 and 3.

General findings related to the impact of EEP

The detailed findings of impacts at each level are presented in the EEP Impacts 2 article (levels 1–3) and the EEP Impacts 3 article (levels 4–5). In this article, we want to share some general observations related to the impact evaluation of the EEP project from the different stakeholder perspectives of the ePortfolio process. During the lifespan of EEP, partners have felt that they have shared a similar approach to student-centred education, emphasizing students’ autonomy and responsibility. Accordingly, in the impact evaluation, many of the experienced impacts were aligned with each other, and all the partners reported clear improvements in line with the proposed impacts of the project (see Figure 1).

From the perspective of the ePortfolio process actors (students, teachers, employers), the findings are quite well aligned with the expected impacts. For example, students developed competences such as digital and transversal skills, and their learning through peer collaboration and their professional development were supported. However, some resistance to change was evident, and digital confidence and privacy issues were experienced as challenges, as was insufficient institutional support or guidance. For teachers, the main impact was the development of competences in guiding student-centred and collaborative learning, which also contributed to their lifelong learning and collaboration with colleagues. The challenges for teachers largely mirrored those faced by students, i.e. insufficient digital competences and support, as well as time management issues. Through cooperation with higher education institutions, employers have been introduced to ePortfolios and have found that ePortfolios could serve as showcases to demonstrate evidence of professional experience and competences as well as personal strengths and interests. Thus, because ePortfolios make applicants’ competences and skills more visible, the evaluation of applicants becomes easier for employers. As an unexpected impact, employers also discovered that ePortfolios can function as a tool for the continuous professional development of staff. However, the impacts on employers are hard to measure at this stage; it takes much longer to see the impacts at this level.

From the perspective of higher education institutions (HEIs) and, through networking activities, the wider European higher education context, it is evident that certain conditions are necessary to enable this kind of development with ePortfolios. During the project, partner organizations have shaped new innovative models to create, support and guide ePortfolio processes, as described in the expected impacts. In addition, more cooperation and dissemination within the education community was reported, and thus the understanding and support of the managerial level increased as well. Indeed, it was found important that the ePortfolio process be in line with management targets and decisions. However, the challenges were connected to the lack of proper conditions for change, improving pedagogical practices, integrating ePortfolios into the curriculum, improving the training context and strategies, and rethinking teachers’ training models. Regarding the professional network around this topic, all the partners have found it to have a far-reaching impact on the future. Through different events, the project has had an impact on other HEIs regionally and globally, and through various channels it has influenced the world of work, as expected. Further, the publications on different stakeholders’ perspectives can widen the reach of the impact in professional networks. In addition, the partners have highlighted the well-functioning partnership within the project and the common understanding of ePortfolios built on it, which has led to several local, national and international initiatives for further development.

Discussion

The European Union framework sets several targets for higher education in Europe, and the main target is to diminish the gap between the needs of the world of work and the skills and competences of graduates. The Modernization of Higher Education strategy (European Commission 2011) focuses on promoting the pedagogical quality of teaching, the labour market relevance of higher education, and innovation and the knowledge triangle through closer links between higher education, research and innovation and the world of work. In addition, the European Employment Strategy – Employment guidelines (European Commission 2017) aims to diminish the gap between labour and skills supply by addressing structural weaknesses in education, and the Digital Agenda for Europe (European Commission 2014) contributes to this especially by promoting the right skills for the modern digital economy. The role of EEP was to address these targets through the improvement of the ePortfolio process in higher education: improved assessment and guidance practices, enhanced digital competences of students and increased connections to the world of work.

This impact evaluation was implemented in order to reflect on the results of the EEP project against these strategic objectives. The benefits of impact evaluation are indisputable. When development practices are closely integrated into your own working context, it is sometimes difficult to recognize what has really changed during the project. That is why partners welcomed the impact evaluation procedure and were eager to cooperate in the process. Interviewees found that the structure of the interviews, the brainstorming sheet and the procedure were straightforward and to the point, which helped them reflect on the impact at the abovementioned five levels. All the experts were helpful and provided invaluable insights into the topics investigated in the EEP evaluation model. In addition, the plan for the impact evaluation was presented during a consultation with the funding agency, the Finnish National Agency for Education (EDUFI), which was keen to read about the results. Furthermore, the first author of the article felt that the five evaluation levels constituted a logical structure throughout the impact evaluation process. The five open-ended questions formulated around the impact evaluation levels served as an easy-to-follow frame for the interviewees and the interviewer. As the experts could reflect on the impact at the five levels using their brainstorming sheets, they provided rich data and evidence for demonstrating impact. This evidence served as indicators to increase the verifiability of impacts.

According to the partners, the EEP project has had an influence in many ways and at many levels. The experts involved in the project have used many channels to disseminate the outcomes of the project. However, the networks and working conditions were not the same for all partners. Some of the partners worked in positions where they had better possibilities to share the new knowledge and understanding, namely as organisational developers or as managers, whereas some of the experts worked as teachers, focusing more on improving the ePortfolio practices in their own work. The project consortium recognizes that the standpoints and the findings in this impact evaluation are limited in size and coverage, and do not represent the whole situation in Europe as such, but the diversified realities of the partners have enabled different kinds of perspectives. These perspectives have been analysed and combined into common results and impacts by an external evaluator, and common European-level recommendations have subsequently been made (see Kunnari & Laurikainen 2018).

This article was produced in the Erasmus+ (KA2 action) funded project “Empowering Eportfolio Process (EEP)”. The beneficiary in the project is Häme University of Applied Sciences (FI) and the partners are VIA University College (DK), Katholieke Universiteit KU Leuven (BE), University College Leuven-Limburg (BE), Polytechnic Institute of Setúbal (PT) and Marino Institute of Education (IE). The project was implemented during 1.9.2016–30.11.2018.

Authors

Annamária Erdei, MBA Student at HAMK’s Business Management and Entrepreneurship programme. During her career she has worked as an analyst and language teacher.

Irma Kunnari, M.Ed. (PhD Fellow in Educational Psychology), Principal lecturer, pedagogical developer and teacher educator at Häme University of Applied Sciences, School of Professional Teacher Education. She currently works as a project manager in the Empowering Eportfolio Process (EEP) project, and has broad experience in developing higher education and the relationships between HE institutions and the work field.

Marja Laurikainen, MBA, Project coordinator, Empowering Eportfolio Process – Education Development Specialist (Global Education). She currently works in global education services designing and coordinating tailored education programmes, creating cooperation and research networks with regional experts and companies.

References

Anderson, G. L. & Herr, K. (1999). The new paradigm wars: is there room for rigorous practitioner knowledge in schools and universities? Educational Researcher, 28, 12–21.

Barrett, H. (2010). Balancing the Two Faces of ePortfolios. Educação, Formação & Tecnologias (Maio, 2010), 3(1), 6–14. Retrieved 12 December 2017 from http://eft.educom.pt/index.php/eft/article/viewFile/161/102

The Erasmus+ UK National Agency (2016). Erasmus+ Impact+Tool. Retrieved 10 February 2018 from https://www.erasmusplus.org.uk/impact-assessment-resources

European Commission (2011). Supporting growth and jobs – an agenda for the modernisation of Europe’s higher education systems. Retrieved 26 September 2018 from https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=CELEX:52011DC0567&from=en

European Commission (2014). The EU explained: Digital agenda for Europe. Retrieved 26 September 2018 from https://eige.europa.eu/resources/digital_agenda_en.pdf

European Commission (2017). European employment strategy – Employment guidelines. Retrieved 26 September 2018 from http://ec.europa.eu/social/main.jsp?catId=101&intPageId=3427

Eynon, B., Gambino, L. M., & Török, J. (2014). What Difference Can ePortfolio Make? A Field Report from the Connect to Learning Project. International Journal of ePortfolio, 4(1), 95–114.

Ghoneim, A. & Ertl, B. (2016). Implementation of ePortfolios in Lower Secondary Schools. Experiences in the Framework of the Project EUfolio. EU classroom ePortfolios with a Focus on Teacher Training. Reflecting Education, 10(1), 55‒69.

Heikkinen, H. L., de Jong, F. P., & Vanderlinde, R. (2016). What is (good) practitioner research? Vocations and Learning, 9(1), 1‒19.

Krippendorff, K. (2004). Content analysis: An introduction to its methodology. Sage Publications.

Kunnari, I. (ed.) (2018). Higher education perspectives on ePortfolios. HAMK Unlimited. [In process, will be available at https://unlimited.hamk.fi/higher-education-perspectives-on-eportfolios]

Kunnari, I. & Laurikainen, M. (eds.) (2017a). Collection on Engaging practices on ePortfolio Process. Hämeenlinna, HAMK. Retrieved 4 October 2018 from https://drive.google.com/file/d/0BxEnFq7yUumMUGV2V2VxVmNaNFU/view

Kunnari, I. & Laurikainen, M. (eds.) (2017b). Students’ perspectives on ePortfolios. HAMK Unlimited. Retrieved 15 September 2018 from https://unlimited.hamk.fi/students-perspectives-on-eportfolios/

Kunnari, I. & Laurikainen, M. (2018). Fifteen recommendations on meaningful use of ePortfolios in Higher Education. In I. Kunnari & M. Laurikainen (eds.) Empowering ePortfolio Process. HAMK Unlimited Journal. [In process, will be available at https://unlimited.hamk.fi/ammatillinen-osaaminen-ja-opetus/fifteen-recommendations]

Laurikainen, M. (2016). Application Form. Call: 2016 KA2 – Cooperation for Innovation and the Exchange of Good Practices. Erasmus+. Strategic Partnership for higher education.

Laurikainen, M. & Kunnari, I. (eds.) (2018). Employers’ perspectives on ePortfolios. Retrieved 15 September 2018 from https://unlimited.hamk.fi/employers-perspectives-on-eportfolios/

Nuutinen, A., Mälkki, A., Huutoniemi, K., & Törnroos, J. (2016). Tieteen tila 2016. Suomen Akatemia. Erweko.

Rasimus, J. (2011). Loogisen viitekehyksen lähestymistapa (LFA). Presentation. Retrieved 3 October 2017 from http://www.cimo.fi/instancedata/prime_product_julkaisu/cimo/embeds/cimowwwstructure/22213_NorthSouthSouth_2011_LFA_koulutus.pdf

Tykkyläinen, S. (2017). Vaikuttava hanke – työkaluja vaikutusten arviointiin ja viestintään. Erasmus+ ja KA2 hankkeiden aloituskoulutus, CIMO. Presentation.